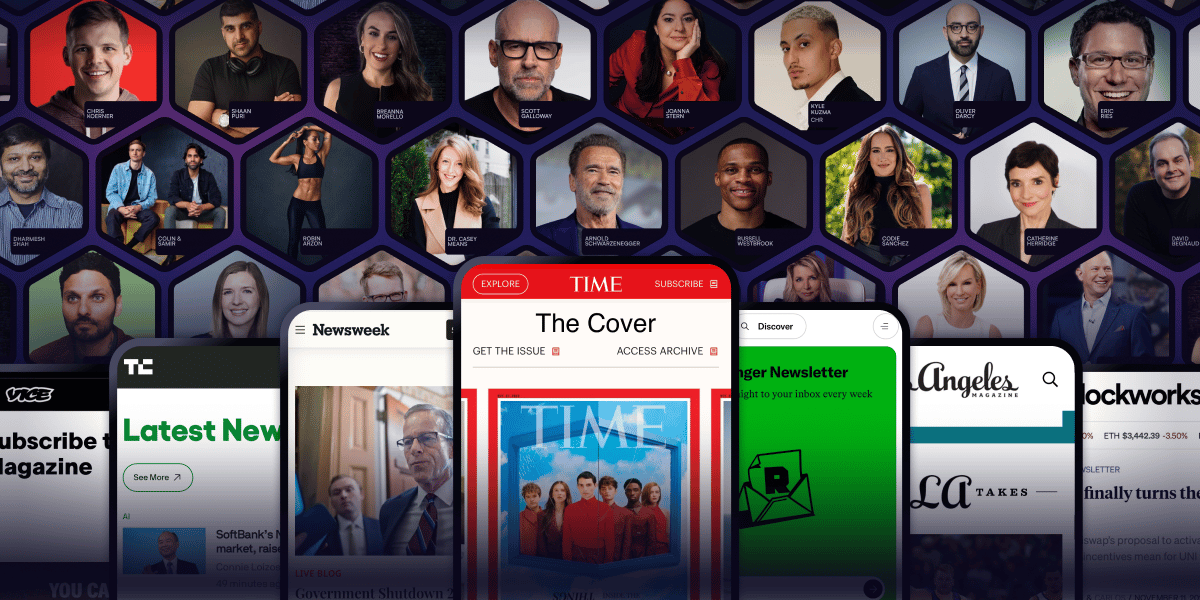

A few years ago, most people thought AI was just chatbots answering homework questions.

Now companies are building machines so powerful they need the electricity of small cities.

This week, something wild happened in the AI world.

Anthropic announced a deal with SpaceX to use the full power of xAI’s massive supercomputer called Colossus 1.

And yes, this is the same xAI owned by Elon Musk.

The news sounds technical at first. But the story behind it is actually very simple.

AI companies are running out of power.

Not emotional power.

Computer power.

And the race to get more of it is starting to look like a sci-fi movie.

The Day Claude Started Slowing Down

If you have used Claude recently, you may have seen messages like:

“Rate limit reached.”

Or maybe Claude became slower during busy hours.

Many developers were frustrated.

Some people pay expensive monthly subscriptions to use Claude for coding and research. Then suddenly they hit limits after a few hours.

Imagine paying for Netflix and getting a popup saying:

“Sorry, too many people are watching movies right now.”

That is basically what happened.

The reason is simple.

Claude became too popular.

More people started using AI for coding, writing, business work, and even daily life. Every message sent to Claude needs huge amounts of computing power behind the scenes.

And unlike normal apps, AI does not just store data.

It thinks.

Or at least it simulates thinking by doing billions of calculations every second.

Those calculations happen inside giant buildings full of expensive chips.

These buildings are called data centers.

AI Needs Buildings?

A lot of non-technical people think AI lives somewhere magically “in the cloud.”

But the cloud is just someone else’s computer.

Actually thousands of computers.

Very expensive ones.

Imagine opening ChatGPT and asking:

“Write me a business email.”

Your message travels across the internet to a data center.

Inside that building are rows and rows of machines running powerful AI chips. They process your request and send back the answer in seconds.

Now multiply that by millions of users.

That is why companies are desperately building bigger AI infrastructure.

And this is where Colossus enters the story.

Meet Colossus

The name already sounds like a Marvel villain.

And honestly, the machine is massive enough to deserve it.

Colossus 1 is one of the biggest AI supercomputers in the world.

It reportedly uses more than 300 megawatts of power.

That number is hard to imagine, so let’s simplify it.

A single laptop charger uses maybe 60 watts.

Colossus reportedly uses more than 300 million watts.

Enough electricity to power huge neighborhoods.
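
If you want to check that comparison yourself, here is a rough back-of-the-envelope sketch. It only uses the two figures from the text (a 60 watt laptop charger and the reported 300 megawatts); the function and variable names are made up for illustration, and the result is an estimate, not a measurement.

```python
# Figures taken from the text; both are rough, reported numbers.
LAPTOP_CHARGER_WATTS = 60      # "maybe 60 watts"
COLOSSUS_MEGAWATTS = 300       # "more than 300 megawatts" (reported)

WATTS_PER_MEGAWATT = 1_000_000


def chargers_equivalent(megawatts: float, charger_watts: float) -> float:
    """Return how many chargers would draw the same power as `megawatts`."""
    return megawatts * WATTS_PER_MEGAWATT / charger_watts


colossus_watts = COLOSSUS_MEGAWATTS * WATTS_PER_MEGAWATT
equivalent = chargers_equivalent(COLOSSUS_MEGAWATTS, LAPTOP_CHARGER_WATTS)

print(f"{colossus_watts:,} W is about {equivalent:,.0f} laptop chargers")
```

In other words, about five million laptop chargers plugged in at the same time.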

Inside this system are some of the world’s most advanced AI chips including H100s, H200s, and GB200s.

You can think of these chips like Formula 1 engines for AI.

Normal computers are bicycles.

These are rocket ships.

Training and running modern AI models like Claude or Grok requires thousands of these chips working together at the same time.

And they are extremely expensive.

Some AI chips cost more than luxury cars.

Why Anthropic Needed Help

Anthropic is one of the biggest AI companies in the world.

Their chatbot Claude is competing directly with ChatGPT and Gemini.

But building good AI is not enough anymore.

You also need enough computing power to serve millions of users every day.

That is becoming the real battle.

For months, users noticed Claude had stricter limits than competitors.

Developers especially complained because coding sessions can eat up lots of AI power.

One long programming conversation might require thousands of AI responses.

That becomes expensive very fast.

Anthropic had a problem.

Demand was growing faster than their infrastructure.

So instead of waiting years to build new systems, they did something surprising.

They turned to Elon Musk’s companies.

Yes, the same Elon Musk who has publicly criticized other AI companies many times.

Tech rivals working together feels weird.

But in the AI world, compute matters more than ego.

The Biggest Win for Claude Users

After the partnership announcement, Anthropic immediately increased limits for Claude users.

This is the part normal users actually care about.

Here’s what changed:

Five-hour rate limits were doubled

Peak hour cuts were removed

API limits for Opus models increased

Coding users got more room to work

That means fewer interruptions.

Less waiting.

Less frustration.

For developers, this is huge.

Many people use Claude Code for serious work now.

Some even run entire startups with AI helping them write software.

When the limits loosen, productivity jumps almost instantly.

Imagine a carpenter suddenly getting unlimited electricity for all their tools.

That is what more compute feels like in AI.

The Real Currency of AI Is No Longer Money

This is the interesting part.

A few years ago, people thought the AI race would be won by whoever had the smartest researchers.

Now it looks like the winner might simply be whoever has the most GPUs and electricity.

AI companies are basically fighting over three things:

Chips

Power

Data centers

That is why companies are spending billions so aggressively.

And everybody wants NVIDIA chips.

Especially the H100.

In tech circles, these chips are treated like rare treasure.

If gold rush miners lived in 2026, they would probably be mining GPUs instead.

Why SpaceX Is Suddenly Involved

At first, the deal sounds random.

Why is a rocket company helping an AI company?

But if you look closely, it actually makes sense.

SpaceX already builds giant infrastructure projects.

They know how to handle power systems, cooling, networking, and industrial-scale engineering.

Running massive AI systems is surprisingly similar to running space infrastructure.

Both require insane amounts of electricity and cooling.

Also, Elon Musk’s companies are becoming more connected with each other.

Tesla works on AI.

xAI works on AI.

SpaceX provides infrastructure.

Starlink provides internet.

Everything slowly connects into one ecosystem.

Reports even suggest xAI could merge deeper into a larger “SpaceXAI” structure in the future.

That sounds futuristic now.

But so did reusable rockets once.

The Weird Future of AI Data Centers

One part of the announcement made people especially curious.

There were discussions about orbital data centers.

Yes.

Computers in space.

It sounds ridiculous at first.

But there is logic behind it.

AI systems produce enormous heat.

Cooling them is expensive.

Electricity is expensive too.

Future space-based infrastructure could theoretically use solar power directly in orbit.

No weather.

No land limits.

Nearly constant sunlight.

Of course, space data centers are still a long way from being normal.

But the fact that serious companies are discussing it tells you how extreme AI demand is becoming.

The future internet may not run from buildings alone.

Some of it could literally run above Earth.

Sci-fi keeps becoming business plans.

AI Companies Are Starting to Look Like Nations

Something else is changing quietly.

Big AI companies are beginning to resemble countries.

Think about it.

They need:

Massive energy supply

Industrial infrastructure

Global communication networks

Billions of dollars

Strategic partnerships

Advanced manufacturing

This is no longer just “building apps.”

These companies now operate at national scale.

That is why governments are paying attention too.

AI is becoming part software industry and part energy industry.

The next generation of tech billionaires may not be the best coders.

They may simply be the people who control the biggest computing networks.

What This Means for Regular People

Most people will never see a GPU cluster.

They will never walk inside a giant AI data center.

But they will feel the effects.

AI tools will become faster.

Smarter.

More available.

The frustrating limits users complain about today may slowly disappear.

And as compute grows, AI systems can handle bigger tasks.

Longer memory.

Better coding.

More realistic voice and video generation.

Stronger reasoning.

The quality jump between old AI and modern AI already feels huge.

And companies are still pouring insane amounts of money into infrastructure.

Which means the real AI boom may just be getting started.

The Funny Part About All This

Ten years ago, tech companies competed over social media apps.

Now billion-dollar companies are fighting over electricity and warehouse-sized computers.

Somewhere along the way, Silicon Valley turned into an industrial revolution sequel.

People imagined the future would look clean and minimal.

Instead, the future looks like giant noisy buildings full of overheating chips consuming city-level power just so someone can ask:

“Can you rewrite this email professionally?”

Technology is funny sometimes.

Final Thoughts

The Anthropic and SpaceX deal shows where the industry is heading.

The next AI breakthroughs may not come from smarter algorithms alone.

They may come from whoever can build the biggest machine.

And right now, the companies winning are the ones turning science fiction into infrastructure.

One thing is clear.

AI is no longer just software.

It is power plants, chips, cooling systems, giant warehouses, rockets, and maybe one day computers floating in space.

And somehow all of that exists because millions of people keep asking chatbots to help them write code, emails, homework, and tweets.

The future arrived in a very strange way.

—Sushila